Best Practices for KVM

Learn about the best practices for Kernel-based Virtual Machine (KVM), including device virtualization for guest operating systems, over-committing processor and memory resources, networking, and PCIe passthrough performance.

Creating VMs with virt-install

The virt-install commands below must be run with root privileges (for example, through sudo) to complete successfully.

By default, libvirt stores images in the /var/lib/libvirt/images directory. The root user owns this directory, so only root can create, delete, or list files in it.

Do not work around this by editing qemu.conf to set user = "root": QEMU processes would then run as root, which means a compromised guest can own your entire host.
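To confirm that a host has not been configured this way, a small check can scan qemu.conf for an uncommented user = "root" line. This is only a minimal sketch: the function name qemu_runs_as_root is made up for illustration, and /etc/libvirt/qemu.conf is assumed to be the configuration path on your distribution.

```shell
# Hypothetical helper (not part of libvirt): exits 0 if the given qemu.conf
# contains an uncommented  user = "root"  line, non-zero otherwise.
# The default path below is an assumption; pass another path as $1 if needed.
qemu_runs_as_root() {
    conf=${1:-/etc/libvirt/qemu.conf}
    grep -Eq '^[[:space:]]*user[[:space:]]*=[[:space:]]*"root"' "$conf"
}
```

For example, `qemu_runs_as_root && echo "WARNING: guests run as root"` prints a warning only when the setting is active.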

Using virt-install with Kickstart files allows for unattended installation of virtual machines.
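As an illustration of what such a Kickstart file might contain, the sketch below writes a very small CentOS 7 answer file. Everything in it is an assumption made for the example (the function name write_minimal_ks, the placeholder root password, the UTC timezone, the automatic partitioning); it is not the file used for the installs in this article and should be adapted before use.

```shell
# Hypothetical helper: writes a minimal CentOS 7 Kickstart file to $1
# (default: centos7-ks.cfg). All values are illustrative placeholders.
write_minimal_ks() {
    out=${1:-centos7-ks.cfg}
    cat > "$out" <<'KS'
# Text-mode, unattended install from the same mirror used by virt-install
text
url --url=http://free.nchc.org.tw/centos/7/os/x86_64/
lang en_US.UTF-8
keyboard us
timezone UTC
# Placeholder password: change it before using this file
rootpw --plaintext changeme
network --bootproto=dhcp
# Wipe the virtual disk and let the installer partition it
zerombr
clearpart --all --initlabel
autopart
bootloader --location=mbr
reboot
%packages
@core
%end
KS
}
```

The generated file would then be served over HTTP from the host so that the ks= URL passed through --extra-args can reach it.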

###Force a VM to stop immediately (no graceful guest shutdown)

virsh destroy vm1-centos7
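Because virsh destroy stops the guest without giving it a chance to shut down, it can be gentler to try virsh shutdown first and fall back to destroy only after a timeout. The following is a rough sketch of that idea, not an established recipe: the function name stop_vm, the 60-second default, and the VIRSH override variable (used only so the function can be exercised without libvirt) are all assumptions.

```shell
# Hypothetical helper: ask the guest to shut down, poll its state once per
# second, and force it off with "virsh destroy" if it is still running after
# the timeout. VIRSH can point to a substitute command for dry runs.
stop_vm() {
    vm=$1
    timeout=${2:-60}
    virsh_cmd=${VIRSH:-virsh}
    "$virsh_cmd" shutdown "$vm" || return 1
    waited=0
    while [ "$waited" -lt "$timeout" ]; do
        # "virsh domstate" reports "shut off" once the guest has stopped
        if [ "$("$virsh_cmd" domstate "$vm")" = "shut off" ]; then
            return 0
        fi
        sleep 1
        waited=$((waited + 1))
    done
    "$virsh_cmd" destroy "$vm"
}
```

A call such as `sudo bash -c '. ./stop_vm.sh; stop_vm vm1-centos7 30'` would wait up to 30 seconds before forcing the stop; the file name stop_vm.sh is likewise just an example.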

Using virt-install to install a CentOS 7.x guest virtual machine

###Create an install shell script

cat > ./vm-centos7-install.sh << 'EOF'
#!/bin/bash
#CentOS 7
NAME=vm1-centos7
# The quoted 'EOF' keeps $NAME and the line continuations literal in the script
virt-install \
  --name "$NAME" \
  --vcpus 1 \
  --memory 1024 \
  --disk "path=/var/lib/libvirt/images/$NAME.qcow2,size=8" \
  --os-type linux \
  --os-variant centos7.0 \
  --network bridge=virbr0 \
  --graphics none \
  --console pty,target_type=serial \
  --location 'http://free.nchc.org.tw/centos/7/os/x86_64/' \
  --extra-args 'console=ttyS0,115200n8 serial ks=http://HostIP/centos7-ks.cfg'
EOF

chmod +x vm-centos7-install.sh && sudo ./vm-centos7-install.sh

Using virt-install to install an Ubuntu 16.04 guest virtual machine

###Create an install shell script

cat > ./vm-ubuntu1604-install.sh << 'EOF'
#!/bin/bash
#Ubuntu 16.04
NAME=vm1-ubuntu1604
# The quoted 'EOF' keeps $NAME and the line continuations literal in the script
virt-install \
  --name "$NAME" \
  --vcpus 1 \
  --memory 1024 \
  --disk "path=/var/lib/libvirt/images/$NAME.qcow2,size=8" \
  --os-type linux \
  --os-variant ubuntu16.04 \
  --network bridge=virbr0 \
  --graphics none \
  --console pty,target_type=serial \
  --location 'http://free.nchc.org.tw/ubuntu/dists/xenial/main/installer-amd64/' \
  --extra-args 'console=ttyS0,115200n8 serial ks=http://HostIP/ubuntu1604-ks.cfg'
EOF

chmod +x vm-ubuntu1604-install.sh && sudo ./vm-ubuntu1604-install.sh

Using virt-install to install a Debian 8 guest virtual machine

###Create an install shell script

cat > ./vm-debian8-install.sh << 'EOF'
#!/bin/bash
#Debian 8
NAME=vm1-debian8
# The quoted 'EOF' keeps $NAME and the line continuations literal in the script
virt-install \
  --name "$NAME" \
  --vcpus 1 \
  --memory 1024 \
  --disk "path=/var/lib/libvirt/images/$NAME.qcow2,size=8" \
  --os-type linux \
  --os-variant debian8 \
  --network bridge=virbr0 \
  --graphics none \
  --console pty,target_type=serial \
  --location 'http://free.nchc.org.tw/debian/dists/jessie/main/installer-amd64/' \
  --extra-args 'console=ttyS0,115200n8 serial auto=true hostname=localhost domain=localdomain url=http://HostIP/d8-preseed.cfg'
EOF

chmod +x vm-debian8-install.sh && sudo ./vm-debian8-install.sh

Importing VMs with virt-install

###Create an import shell script for an existing image disk

cat > ./vm-debian8-import.sh << 'EOF'
#!/bin/bash
#Debian 8
NAME=vm1-debian8
# --import skips the installer and boots the existing disk image directly
virt-install \
  --name "$NAME" \
  --vcpus 1 \
  --memory 1024 \
  --disk "path=/var/lib/libvirt/images/$NAME.qcow2" \
  --graphics none \
  --console pty \
  --import
EOF

chmod +x vm-debian8-import.sh && sudo ./vm-debian8-import.sh

SR-IOV and PCI Passthrough

SR-IOV (Single Root I/O Virtualization) lets a single PCIe device present itself as multiple virtual functions, so several guest machines can access the hardware device on the hypervisor host directly and at the same time. It is beneficial for workloads with very high packet rates or very low latency requirements.

PCI Passthrough gives a single guest exclusive, direct access to a physical network card. Compared with SR-IOV, it yields only a small additional performance gain.
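Whether a NIC supports SR-IOV at all can usually be read from sysfs: an SR-IOV capable device exposes a sriov_totalvfs file under its device directory. The sketch below reads that value. The function name sriov_total_vfs and the SYSFS_NET override (there only so the function can be tried against a fake directory tree) are assumptions for illustration.

```shell
# Hypothetical helper: print how many virtual functions the NIC behind a
# network interface can expose, or 0 if it has no SR-IOV support.
# SYSFS_NET defaults to the real sysfs location.
sriov_total_vfs() {
    iface=$1
    root=${SYSFS_NET:-/sys/class/net}
    f="$root/$iface/device/sriov_totalvfs"
    if [ -r "$f" ]; then
        cat "$f"
    else
        echo 0
        return 1
    fi
}
```

On a capable device, virtual functions are then typically created by writing a count to the neighbouring sriov_numvfs file, for example `echo 4 | sudo tee /sys/class/net/eno1/device/sriov_numvfs`, where eno1 and 4 are placeholder values.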

Validate host virtualization setup

[stack@localhost ~]$ virt-host-validate

QEMU: Checking for hardware virtualization : PASS

QEMU: Checking if device /dev/kvm exists : PASS

QEMU: Checking if device /dev/kvm is accessible : PASS

QEMU: Checking if device /dev/vhost-net exists : PASS

QEMU: Checking if device /dev/net/tun exists : PASS

QEMU: Checking for cgroup 'memory' controller support : PASS

QEMU: Checking for cgroup 'memory' controller mount-point : PASS

QEMU: Checking for cgroup 'cpu' controller support : PASS

QEMU: Checking for cgroup 'cpu' controller mount-point : PASS

QEMU: Checking for cgroup 'cpuacct' controller support : PASS

QEMU: Checking for cgroup 'cpuacct' controller mount-point : PASS

QEMU: Checking for cgroup 'cpuset' controller support : PASS

QEMU: Checking for cgroup 'cpuset' controller mount-point : PASS

QEMU: Checking for cgroup 'devices' controller support : PASS

QEMU: Checking for cgroup 'devices' controller mount-point : PASS

QEMU: Checking for cgroup 'blkio' controller support : PASS

QEMU: Checking for cgroup 'blkio' controller mount-point : PASS

QEMU: Checking for device assignment IOMMU support : PASS

QEMU: Checking if IOMMU is enabled by kernel : WARN (IOMMU appears to be disabled in kernel. Add intel_iommu=on to kernel cmdline arguments)

LXC: Checking for Linux >= 2.6.26 : PASS

LXC: Checking for namespace ipc : PASS

LXC: Checking for namespace mnt : PASS

LXC: Checking for namespace pid : PASS

LXC: Checking for namespace uts : PASS

LXC: Checking for namespace net : PASS

LXC: Checking for namespace user : PASS

LXC: Checking for cgroup 'memory' controller support : PASS

LXC: Checking for cgroup 'memory' controller mount-point : PASS

LXC: Checking for cgroup 'cpu' controller support : PASS

LXC: Checking for cgroup 'cpu' controller mount-point : PASS

LXC: Checking for cgroup 'cpuacct' controller support : PASS

LXC: Checking for cgroup 'cpuacct' controller mount-point : PASS

LXC: Checking for cgroup 'cpuset' controller support : PASS

LXC: Checking for cgroup 'cpuset' controller mount-point : PASS

LXC: Checking for cgroup 'devices' controller support : PASS

LXC: Checking for cgroup 'devices' controller mount-point : PASS

LXC: Checking for cgroup 'blkio' controller support : PASS

LXC: Checking for cgroup 'blkio' controller mount-point : PASS
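With this many checks, the one WARN line is easy to miss. A small filter that hides every passing check makes it stand out; this is only a sketch, the function name non_pass_checks is made up, and it assumes the report lines end in ": PASS" as in the listing above.

```shell
# Hypothetical filter: read virt-host-validate output on stdin and print only
# the checks that did not end in PASS (WARN, FAIL, and so on).
non_pass_checks() {
    grep -Ev ':[[:space:]]*PASS[[:space:]]*$'
}
```

It would be used as `virt-host-validate | non_pass_checks`, which for the run above leaves only the IOMMU warning.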

Enable IOMMU in the kernel

###Enable IOMMU support on the kernel command line through GRUB: iommu=pt intel_iommu=on

sudo sed -i 's/GRUB_CMDLINE_LINUX=\"\(.*\)\"/GRUB_CMDLINE_LINUX=\"\1 iommu=pt intel_iommu=on\"/' /etc/default/grub

sudo grub2-mkconfig --output=/boot/grub2/grub.cfg

###reboot

sudo reboot
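After the reboot it is worth confirming that the new arguments actually reached the kernel. The sketch below looks for an exact argument in the kernel command line; the function name has_kernel_arg is an assumption, and the optional second parameter exists only so the check can be run against a sample file instead of /proc/cmdline.

```shell
# Hypothetical helper: exit 0 if the exact argument $1 appears on the kernel
# command line (read from /proc/cmdline unless another file is given as $2).
has_kernel_arg() {
    arg=$1
    cmdline=${2:-/proc/cmdline}
    # Put one argument per line, then look for an exact full-line match
    tr ' ' '\n' < "$cmdline" | grep -Fxq -- "$arg"
}
```

For example, `has_kernel_arg intel_iommu=on && has_kernel_arg iommu=pt && echo "IOMMU arguments present"`. Re-running virt-host-validate should then no longer show the IOMMU warning.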

Physical NIC PCI Passthrough

###--hostdev takes a libvirt node device name, as shown by 'virsh nodedev-list'
#[stack@localhost ~]$ sudo lspci | grep -i ethernet
#04:00.0 Ethernet controller: Emulex Corporation OneConnect NIC (Skyhawk) (rev 10)
#04:00.1 Ethernet controller: Emulex Corporation OneConnect NIC (Skyhawk) (rev 10)
#04:00.2 Ethernet controller: Emulex Corporation OneConnect NIC (Skyhawk) (rev 10)
#04:00.3 Ethernet controller: Emulex Corporation OneConnect NIC (Skyhawk) (rev 10)

sudo virsh nodedev-list | grep pci_0000_04
pci_0000_04_00_0
pci_0000_04_00_1
pci_0000_04_00_2
pci_0000_04_00_3

###The matching virt-install option for the last port (04:00.3)
--hostdev pci_0000_04_00_3
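The listings above show the naming pattern: the lspci address 04:00.3 corresponds to the node device pci_0000_04_00_3, that is, the PCI domain is prepended and every ':' and '.' becomes '_'. A small sketch of that conversion follows; the function name pci_addr_to_nodedev is made up, and domain 0000 is assumed when lspci does not print one.

```shell
# Hypothetical helper: convert an lspci address (04:00.3 or 0000:04:00.3)
# into the libvirt node device name used by --hostdev.
pci_addr_to_nodedev() {
    addr=$1
    case "$addr" in
        *:*:*) ;;                 # domain already present
        *) addr="0000:$addr" ;;   # assumption: default PCI domain 0000
    esac
    printf 'pci_%s\n' "$(printf '%s' "$addr" | tr ':.' '__')"
}
```

Running `pci_addr_to_nodedev 04:00.3` prints pci_0000_04_00_3, which can be checked against the output of virsh nodedev-list before it is passed to --hostdev.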

Node Device (help keyword 'nodedev')
    nodedev-create      create a device defined by an XML file on the node
    nodedev-destroy     destroy (stop) a device on the node
    nodedev-detach      detach node device from its device driver
    nodedev-dumpxml     node device details in XML
    nodedev-list        enumerate devices on this host
    nodedev-reattach    reattach node device to its device driver
    nodedev-reset       reset node device

###Create an install shell script with one Ethernet NIC passthrough into VM

cat > ./vm-centos7-install-pt.sh << 'EOF'
#!/bin/bash
#CentOS 7
NAME=vm2-centos7
# First argument: node device name of the NIC to pass through
NIC=$1
virt-install \
  --name "$NAME" \
  --vcpus 1 \
  --memory 1024 \
  --disk "path=/var/lib/libvirt/images/$NAME.qcow2,size=8" \
  --os-type linux \
  --os-variant centos7.0 \
  --network bridge=virbr0 \
  --hostdev "$NIC" \
  --graphics none \
  --console pty,target_type=serial \
  --location 'http://free.nchc.org.tw/centos/7/os/x86_64/' \
  --extra-args 'console=ttyS0,115200n8 serial ks=http://HostIP/centos7-ks.cfg'
EOF

chmod +x vm-centos7-install-pt.sh && sudo ./vm-centos7-install-pt.sh pci_0000_04_00_3

Virtual Networking - libvirt domain XML file for VMs

sudo virsh net-list --all
 Name      State    Autostart   Persistent
----------------------------------------------------------
 default   active   yes         yes

$ cat /usr/share/libvirt/networks/default.xml
<network>
  <name>default</name>
  <bridge name="virbr0"/>
  <forward/>
  <ip address="192.168.122.1" netmask="255.255.255.0">
    <dhcp>
      <range start="192.168.122.2" end="192.168.122.254"/>
    </dhcp>
  </ip>
</network>

Create a new libvirt network

- Create an XML definition file (e.g. internal_intranet.xml) for the intranet network. The bridge name is yours to choose, and the DHCP range must fall inside the network's own subnet.

<network>
  <name>intranet</name>
  <bridge name="intranet-br0"/>
  <forward/>
  <ip address="10.0.0.1" netmask="255.255.255.0">
    <dhcp>
      <range start="10.0.0.2" end="10.0.0.254"/>
    </dhcp>
  </ip>
</network>

- Now that the XML file has been created, define the network with virsh net-define, then start it and mark it to start automatically.

$ sudo virsh net-define internal_intranet.xml
Network intranet defined from internal_intranet.xml

# virsh net-list --all
 Name       State      Autostart
-----------------------------------------
 default    active     yes
 intranet   inactive   no

# virsh net-start intranet
Network intranet started

# virsh net-autostart intranet
Network intranet marked as autostarted

# virsh net-list
 Name       State    Autostart
-----------------------------------------
 default    active   yes
 intranet   active   yes
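When several such networks are needed, the definition file can be generated rather than written by hand, which also keeps the gateway address and the DHCP range in the same subnet. The sketch below assumes a /24 network and takes its first three octets as a parameter; the function name make_network_xml and its argument order are made up for this example.

```shell
# Hypothetical helper: print a NAT network definition for a /24 subnet.
#   $1 = network name, $2 = bridge name, $3 = first three octets (e.g. 10.0.0)
# The gateway is .1 and the DHCP pool is .2 to .254 of the same subnet.
make_network_xml() {
    name=$1
    bridge=$2
    prefix=$3
    cat <<EOF
<network>
  <name>$name</name>
  <bridge name="$bridge"/>
  <forward/>
  <ip address="$prefix.1" netmask="255.255.255.0">
    <dhcp>
      <range start="$prefix.2" end="$prefix.254"/>
    </dhcp>
  </ip>
</network>
EOF
}
```

For instance, `make_network_xml intranet intranet-br0 10.0.0 > internal_intranet.xml` produces a file that can be passed to virsh net-define.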

- PCI Device SR-IOV Passthrough with proper PCI bus info

Add a <hostdev> definition to the <devices> section, using the bus, slot, and function attribute values identified with the lspci command. For example:

$ lspci | grep -i ethernet

06:00.0 Ethernet controller: Intel Corporation I350 Gigabit Network Connection (rev 01)

06:00.1 Ethernet controller: Intel Corporation I350 Gigabit Network Connection (rev 01)

06:00.2 Ethernet controller: Intel Corporation I350 Gigabit Network Connection (rev 01)

06:00.3 Ethernet controller: Intel Corporation I350 Gigabit Network Connection (rev 01)

<devices>
  ...
  <hostdev mode='subsystem' type='pci' managed='yes'>
    <source>
      <address domain='0x0000' bus='0x06' slot='0x00' function='0x0'/>
    </source>
  </hostdev>
</devices>
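The mapping from an lspci address to the address attributes is mechanical: in 06:00.0 the bus is 06, the slot is 00, and the function is 0, each written as a hexadecimal value. A sketch of a generator for the hostdev element follows; the function name pci_addr_to_hostdev_xml is made up, and domain 0000 is assumed when the address carries none.

```shell
# Hypothetical helper: turn an lspci address (06:00.0 or 0000:06:00.0) into a
# libvirt <hostdev> element for PCI passthrough.
pci_addr_to_hostdev_xml() {
    addr=$1
    case "$addr" in
        *:*:*) ;;                 # domain already present
        *) addr="0000:$addr" ;;   # assumption: default PCI domain 0000
    esac
    domain=${addr%%:*}            # 0000
    rest=${addr#*:}               # 06:00.0
    bus=${rest%%:*}               # 06
    devfn=${rest#*:}              # 00.0
    slot=${devfn%%.*}             # 00
    func=${devfn#*.}              # 0
    cat <<EOF
<hostdev mode='subsystem' type='pci' managed='yes'>
  <source>
    <address domain='0x$domain' bus='0x$bus' slot='0x$slot' function='0x$func'/>
  </source>
</hostdev>
EOF
}
```

Calling `pci_addr_to_hostdev_xml 06:00.0` prints the same hostdev element as the example above, ready to be placed inside the devices section of the domain XML.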

ProTip: MAC address is optional and will be automatically generated if omitted.
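If a fixed MAC address is preferred, for example to pin a DHCP lease, one can be generated up front. The interface examples in this article all use the 52:54:00 prefix, so the sketch below keeps that prefix and randomizes the last three octets. The function name random_kvm_mac is made up, and reading /dev/urandom through od is just one possible way to get random bytes.

```shell
# Hypothetical helper: print a MAC address with the 52:54:00 prefix and three
# random octets taken from /dev/urandom.
random_kvm_mac() {
    # od prints the three bytes as space-separated hex pairs, e.g. " f3 79 c0"
    bytes=$(od -An -N3 -tx1 /dev/urandom)
    # $bytes is deliberately unquoted so it splits into three arguments
    printf '52:54:00:%s:%s:%s\n' $bytes
}
```

The result can be placed in the address attribute of the mac element of any interface definition.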

- Default NAT mode with an existing connected network interface (libvirt 'default' network)

<interface type='network'>
  <mac address='52:54:00:f3:79:c0'/>
  <source network='default'/>
  <model type='virtio'/>
</interface>

- Bridge mode with the eno1 physical interface (macvtap, type='direct')

<interface type='direct'>
  <mac address='52:54:00:f3:79:c0'/>
  <source dev='eno1' mode='bridge'/>
  <model type='virtio'/>
</interface>

- Passthrough mode with the eno1 physical interface (macvtap, type='direct')

<interface type='direct'>
  <mac address='52:54:00:f3:79:c0'/>
  <source dev='eno1' mode='passthrough'/>
  <model type='virtio'/>
</interface>

- OVS bridge mode with a virtio virtual NIC

<interface type='bridge'>
  <source bridge='ovsbr0'/>
  <virtualport type='openvswitch'/>
  <model type='virtio'/>
</interface>

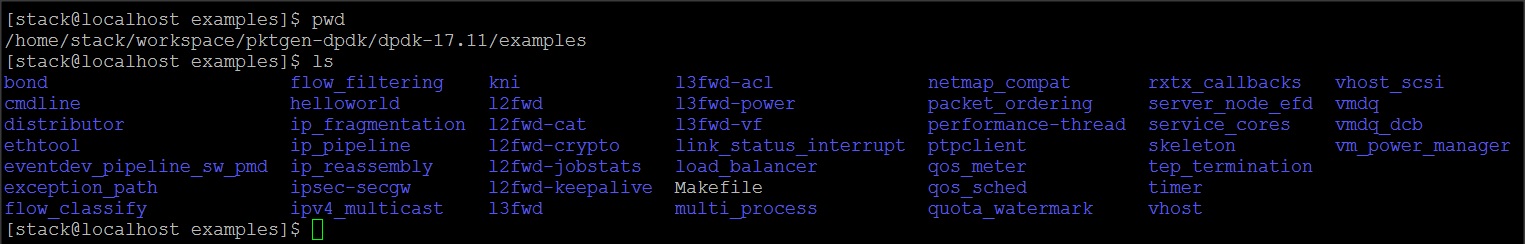

DPDK and PKTGEN

###CentOS 7
sudo yum -y install epel-release
sudo yum -y groupinstall "Development Tools"
sudo yum -y install "kernel-devel-uname-r == $(uname -r)" libpcap-devel numactl-devel

###Download pktgen-dpdk and dpdk Package

mkdir -p workspace/pktgen-dpdk && cd workspace/pktgen-dpdk

wget http://dpdk.org/browse/apps/pktgen-dpdk/snapshot/pktgen-3.4.9.tar.xz

wget https://fast.dpdk.org/rel/dpdk-17.11.tar.xz

###Extract sources and Export environment variables

tar -Jxvf pktgen-3.4.9.tar.xz

tar -Jxvf dpdk-17.11.tar.xz

cd dpdk-17.11

export RTE_SDK=$(pwd)

export RTE_TARGET=x86_64-native-linuxapp-gcc

###Enable pcap (libpcap headers are required)

make config T=x86_64-native-linuxapp-gcc

sed -ri 's,(PMD_PCAP=).*,\1y,' build/.config

###Build and Install DPDK

make install T=x86_64-native-linuxapp-gcc

......

......

== Build app/test-eventdev

CC evt_main.o

CC evt_options.o

CC evt_test.o

CC parser.o

CC test_order_common.o

CC test_order_queue.o

CC test_order_atq.o

CC test_perf_common.o

CC test_perf_queue.o

CC test_perf_atq.o

LD dpdk-test-eventdev

INSTALL-APP dpdk-test-eventdev

INSTALL-MAP dpdk-test-eventdev.map

Build complete [x86_64-native-linuxapp-gcc]

Installation cannot run with T defined and DESTDIR undefined

###Build pktgen

cd ../pktgen-3.4.9

make

- 00_pktgen-dpdk-setup.sh

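cat > 00_pktgen-dpdk-setup.sh << 'EOF'
#!/bin/bash
# Reserve 1024 hugepages of 2 MB each and mount hugetlbfs
echo 1024 > /sys/kernel/mm/hugepages/hugepages-2048kB/nr_hugepages
mkdir -p /mnt/huge
mount -t hugetlbfs nodev /mnt/huge
# Load the UIO modules from the DPDK 17.11 tree built above
modprobe uio
insmod ../dpdk-17.11/x86_64-native-linuxapp-gcc/kmod/igb_uio.ko
# Bind both NIC ports to igb_uio (DPDK 17.11 ships the bind script as usertools/dpdk-devbind.py)
../dpdk-17.11/usertools/dpdk-devbind.py -b igb_uio 08:00.0 08:00.1
EOF

chmod 755 00_pktgen-dpdk-setup.sh && sudo ./00_pktgen-dpdk-setup.sh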
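Before starting pktgen it helps to confirm that the hugepage reservation succeeded, since the kernel may grant fewer pages than requested when memory is fragmented. The sketch below reads the HugePages_Total counter; the function name hugepages_total is made up, and the optional file argument exists only so it can be tried on a sample file instead of /proc/meminfo.

```shell
# Hypothetical helper: print the number of hugepages currently reserved,
# taken from the HugePages_Total line of /proc/meminfo (or the file in $1).
hugepages_total() {
    meminfo=${1:-/proc/meminfo}
    awk '/^HugePages_Total:/ { print $2 }' "$meminfo"
}
```

After the setup script has run, `hugepages_total` should report the 1024 pages it requested; a smaller number suggests the reservation was only partly satisfied.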

- 01_pktgen-dpdk-run.sh

cat > 01_pktgen-dpdk-run.sh << 'EOF'
#!/bin/bash
./app/app/x86_64-native-linuxapp-gcc/app/pktgen -c 0x1f -n 3 --proc-type auto --socket-mem 256 --file-prefix pg -- -p 0x03 -P -m "[1:3].0, [2:4].1"
EOF

chmod 755 01_pktgen-dpdk-run.sh && sudo ./01_pktgen-dpdk-run.sh
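The -c and -p values in the run script are hexadecimal bit masks: bit N set means core N (for -c) or port N (for -p) is used. So 0x1f selects cores 0 to 4 and 0x03 selects ports 0 and 1, which matches the -m mapping, where cores 1 and 3 handle receive and transmit for port 0 and cores 2 and 4 do the same for port 1. The sketch below computes such a mask from a list of indices; the function name coremask is made up for illustration.

```shell
# Hypothetical helper: build a hexadecimal bit mask from a list of core (or
# port) indices by setting one bit per index.
coremask() {
    mask=0
    for core in "$@"; do
        mask=$((mask | (1 << core)))
    done
    printf '0x%x\n' "$mask"
}
```

For example, `coremask 0 1 2 3 4` prints 0x1f and `coremask 0 1` prints 0x3, the same values as -c 0x1f and -p 0x03 above.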
